Introduction

Sequential data modeling lies at the intersection of mathematics, statistics, and probability. The question to address is: if a system may occupy one of several discrete “states” during an ordered sequence of time steps, can we predict future patterns of the states that the system will occupy given the history of the system? Further, if those states have associated with them a measurable real-valued magnitude, can the magnitudes of those predicted future states also be predicted? Applications of such modeling are as varied as predicting a sequence of words and punctuation given an input sequence of words and punctuation; predicting the future state and magnitude value of a financial instrument given its history; a robot navigating a complex motion where multiple joints must traverse a sequence of positions in a particular order; or predicting a most fuel-efficient delivery route.

Markov Chains

Modern sequential data modeling first appeared in the form of what we now call the “Markov Chain” in 1906 when its progenitor for whom it’s named, Andrey Markov, published his first paper on the topic. A Markov Chain is a sequence of random variables x_1, x_2,\ldots for which, for any time step t, the distribution of x_t given all previous x_i\text{'}s depends only on the most recent value x_{t-1} (as defined by Gelman, Carlin, Stern, Dunson, Vehtari, and Rubin in “Bayesian Data Analysis Third Edition”). The property that the state of the system during for any particular depends only on the state of the system during the prior time step is the denoted the “Markov Property” and is a foundational assumption for Markov Chains.

Given the Markov Property, the evolution of such a system that navigates a finite set of discrete states can be measured by observing the state transition of the system and tracking the counts of how often the system transitions from one state to another. Subsequently, a matrix of probability values corresponding to state transitions can be computed.

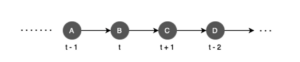

For example, suppose that a system may occupy states A, B, C, D during time steps

Supposed then that we choose to collect some data on the behavior of this system and observe two state transitions: state A transitions to state B and state B transitions back to state A. The data points could look like:

{

[A, B],

[B, A],

}

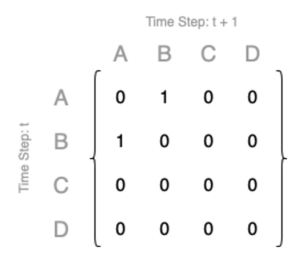

We could populate a count matrix reflecting our observations like this:

Then we could normalize such counts and compute from this a corresponding matrix of state transition probability values modeling the evolution of the system being observed.

Markov Decision Processes and Neural Networks in Decision Sciences

If in a Markov Chain like the one just briefly described the evolution of a system is modeled from the perspective of a passive observer, then the “Markov Decision Process” brings a sense of proactive action and purpose to such systems. Introduced in 1950s, the Markov Decision Process generalizes the Markov Chain to include a set of available actions that may be taken to affect the state transition of the system for the purpose of maximizing some future reward.

Instead of passively observing and modeling the behavior of such a system, we assume that the “environment” (the finite, discrete set of states that the system may occupy at any given time step) returns some real-valued numerical “reward” at each time step and that an “agent” may select amongst some set of “actions” for the purpose of affecting the state transition with the explicit intention of maximizing the cumulative future reward. As such, the Markov Decision Process brings a spirit of rigorous stochastics to optimal decision-making problems.

Still assumed in the framework of the Markov Decision Process is the Markov Property that only the present state of the system may be used in the action-selection process. The history of how the system arrived at its present state is assumed to be irrelevant.

Also, as a generalization of the Markov Chain, the Markov Decision Process reduces to a Markov Chain in the particular scenario that all states of an environment return zero reward and the only action available is a null action.

The Markov Decision Process is also the framework of modern reinforcement learning where the system may be too complex to fully model and algorithms that balance exploration of the system with maximizing reward generation from the environment. Modern reinforcement learning frequently encounters problems where environments break the Markov Property and state histories are highly relevant to the action selection.

There are several method by which reinforcement learning methods attempt to use some state history. In one, states of a system are augmented with additional metadata containing some state history. Other methods use various flavors of artificial neural networks to implicitly store some history of a system. Recurrent neural networks (RNNs) and Long Short-Term Memory (LSTM) neural networks use hidden states to summarize the past and the transformers that have brought us Large Language Models (LLMs) have their “attention” mechanism to model the manner in which one state affects another over long sequences. Though some successes have been made in modeling non-Markovian systems via neural network-based approaches–especially the transformer–they are still quite computationally expensive to train with any reliability.

There is also presently robust research into “reward machines” using automata-based learning (algorithms that factorize a problem into a Markovian transition model and a non-Markovian reward model) or “Linear Temporal Logic” (LTL) where agents can learn exact orders of actions.

A general challenge of all such approaches is the exponential expansion of the state space as more state history is considered. RNNs and LSTMs do not explicitly expand the state space to cover more history, but rather create a hidden state space that must be optimized with expensive computational training. Transformers are arguably the most successful at modeling non-Markovian systems, but still suffer from a quadratic computational complexity relative to their history.

Our Proposal

Our patented methodology is a comparatively simple approach to modeling sequential data problems in a manner that circumvents issues of computational complexity with regard to the data structure comprising the model (assuming discrete and finite state environments and finite time steps). We went back to first principles and questioned every assumption as we built a new framework for sequential data model, especially the nearly-ubiquitous assumption that all problems are best solved by some flavor of artificial neural network. Unnecessary complexity of methodology should also be avoided. We will show that our approach is direct, accurate, generalizable to unseen data when appropriate, and computationally scalable.

There are three categories of sequential data problems that we are proposing solutions to.

The first is a direct method for generalizing Markov Chains into a sequence-to-sequence modeling methodology with a learning mechanism that casts aside the Markov Property, but in a manner such that our data structure grows less-than-linearly and requires no expensive backpropagation of neural networks. While most of our focus on this problem assumes environments in which the states are all mutually orthogonal, there are ways to fold in embedding models that establish non-orthogonal relationships amongst the states of the system. This method converges exactly to the Markov Chain in the special case that only a single time step of history is used.

Secondly, we generalize the first methodology to “sequence-to-sequence modeling with state magnitudes”, where each instance of a system occupying one of multiple mutually orthogonal states is associated with some real-valued, measurable magnitude. In addition to prediction a sequence of states, we also predict the future magnitudes of those states given the history of the system.

![]()

Lastly, we also generalize our sequence-to-sequence modeling methodology to a direct generalization of the Markov Decision Process such that using any depth of state history is not only possible, but computationally viable. With our approach, we can model a wider class of problems in which the full state history of a system is relevant, including where rewards must be obtained in a particular order. This method converges exactly to the Markov Decision Process in the particular case where only a single time step of history is used.

Most importantly, the size of our model data structures for all three cases above increases less-than-linearly with the configured maximum allowed sequence length and less-than-linearly with the row count of the collected training dataset. The training process involves only tracking ordered-state histories and counts in a tree-like graph topology. Our software constructs these model structures optionally in either a python dictionary that can be saved to a JSON file or to a Neo4j graph database.

We are eager to share additional details about our methodology in future posts and to identify problems in science and industry that we can solve.